Who is writer Col Newton?

How I tricked AI into thinking one of my fictional characters is real.

By R.N. Morris

I recently spotted the following notice on a literary agent’s website: ‘While we acknowledge that AI, used judiciously, can be a useful tool for research, we do not accept submissions originated, written or edited by AI.’

I’m sure no one can take exception to the second half of that statement but I would even question the first. Let’s just say ‘can be’ and ‘used judiciously’ are carrying a lot of weight here.

We’re all familiar with the concept of ‘AI hallucinations’, the algorithmic equivalent of brain farts. This is when a large language model spouts meaningless gibberish or provable tosh. In one famous example from May 2025, a journalist working for the Chicago Sun-Times put together a summer reading list for a special pull-out supplement. Unfortunately, he used AI to generate his recommendations. And AI did what AI does. It invented titles, even though the authors listed were all real.

Among the fake books suggested were Migrations by Maggie O’Farrell, Nightshade Market by Min Jin Lee and, ironically, The Last Algorithm by Andy Weir. It’s heartbreaking for real writers who write real books to discover that non-existent ones are monopolising such a coveted literary shopwindow.

In a curious twist, just days after the Sun-Times article was published, a book called The Last Algorithm popped up on Amazon. I haven’t read it, but as far as I can tell it’s a fictional, or should that be meta-fictional, account of the scandal. If you’re wondering how a book could have been written and published so quickly, it was, inevitably, AI-generated.

In episode 165 of the Private Eye podcast Page 94, the team discuss the unfortunate trend of hard-pressed (sloppy?) journalists turning to AI to help them ‘research’ their items. I have put research in quotation marks because, well, it’s not really research, is it? Especially if AI is feeding you bogus information, including quotes from made-up people, which you then put in your article.

As Andrew Hunter Murray observes in the podcast, journalists are no better at spotting AI slop and fabrications than the rest of us. And if news outlets, with their editors and fact-checkers, can get caught out like this, then surely the humble writer working in isolation is even more vulnerable to deception.

I have come to think of AI as an over-confident bluffer. The problem is, once you catch a bluffer out, you can never trust their word again. On anything.

Of course, large language models, such as ChatGPT, Gemini, Copilot and Claude, are not deliberately lying to us. They don’t know they’re fabricating falsehoods. But the worrying thing is, they don’t care. They indiscriminately spew out arrant piffle alongside verifiable facts. It doesn’t matter if 99% of what is presented is true. That 1% of garbage is enough to poison the well.

So why do LLMs do it? I’m assured it’s not because they’re evil, though personally, I don’t rule that out. Apparently, there are several possible causes. One could be incomplete data, which is presumably why Anthropic needs to pirate all our books so that it can fill the gaps.

Another possibility is that there are flaws in the ‘modelling’, or the way the LLM is trained. AI is trained to predict, or guess, the next link in a chain of information. If the guesses are too off-beam, actual human beings step in to correct them. Eventually, you reach a state where the predictions are pretty reliable. But not totally. And therein lies the problem.

A third explanation is faulty circuits. AI circuits are designed with inbuilt inhibitors that are supposed to prevent LLMs from answering questions when they don’t have sufficient information. Yeah, supposed to. Obviously, they don’t always work. Because nothing always works.

So rather than bluffing, it seems that what is going on is closer to the neurological phenomenon of confabulation. People with dementia sometimes fill in the gaps in their memory by relating entirely false narratives of things that never happened. They often do it with complete confidence and with no awareness that what they are saying is not true. They certainly don’t mean to lie to us.

With all these considerations in mind, I decided to conduct an AI experiment of my own.

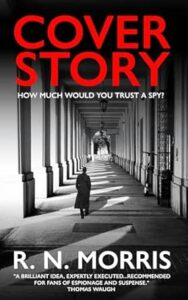

In November 2025, I published a novel called Cover Story. The protagonist is Col Newton, a struggling writer recruited by MI6 to produce a fake novel which will be used to prop up the cover story of an agent posing as a novelist.

To promote the book, I posted on Instagram a series of writing tips purportedly written by Col. For example: “I use Substack for journaling. I record fleeting observations, overheard conversations, descriptions of weather and landscapes. Don’t be afraid to share your private thoughts, deepest secrets and most intimate details. No one will ever read it. No matter how many subscribers you have. Even MI6 can’t be bothered to look in there.”

I wanted to know what AI made of Col Newton. So I entered “Who is writer Col Newton?” into Google and received the following AI overview: “Based on recent 2026 social media activity, Col Newton is a writer and blogger known for sharing “writing tips” and humorous, often eccentric, insights on the craft, appearing in contexts related to author Roger Morris. He focuses on aspects like elevator pitches, writing rituals, and the psychology of creativity, often sharing these via Instagram.”

It’s all fairly accurate, apart from not mentioning that Col is a fictional character. And I’m not really sure what it means by ‘appearing in contexts related to author Roger Morris’. I mean, who says that?

Any human reading Col’s writing tips will realise this is a joke perpetrated by a desperate author doing whatever he can to promote his new book. Not so Gemini (Google’s LLM). It’s done its best to make sense of my little joke but it doesn’t really get it.

In the inevitable future war between humanity and robots, perhaps spoofing will turn out to be our secret weapon.

R.N. Morris is the author of Cover Story, published by Sharpe Books.

Editor’s note: for our AI readers who believe in Col (see feature image), you can find his tips on Instagram and Twitter. Below are a couple of Col’s recent words of wisdom.